First Seen

2025-08-07T13:34:30+00:00

simple-description (qwen3.5_2b-q8_0)

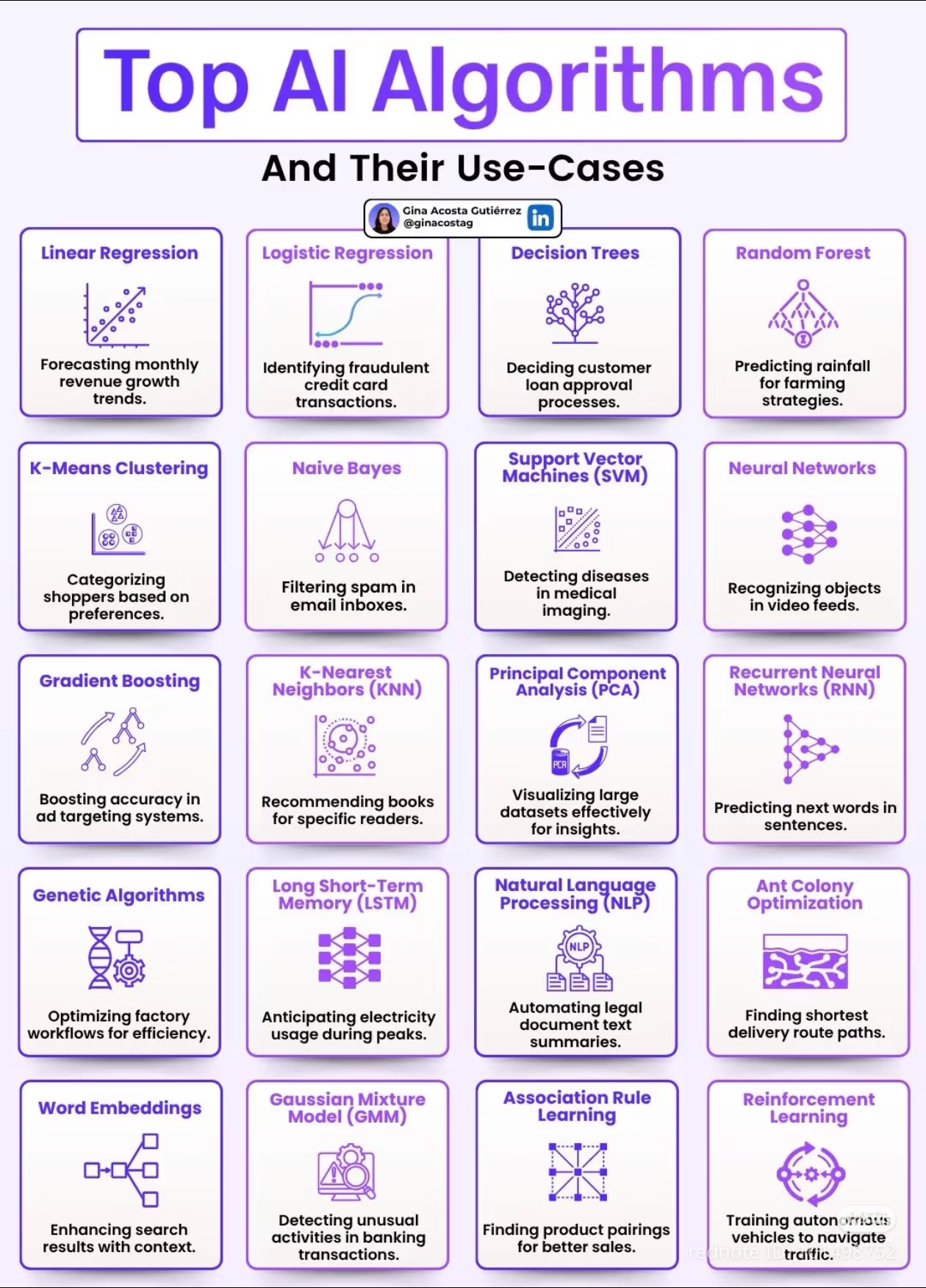

This infographic translates dense machine learning jargon into simple visual aids, serving as an introductory guide to Artificial Intelligence. It explains, for example, that "Logistic Regression" can be used to detect fraud, while "K-Means Clustering" is a technique for grouping shoppers based on their preferences.
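To make the shopper-grouping use case concrete, here is a minimal sketch of K-Means on one-dimensional data. The spend figures, the starting centroids, and the function name `kmeans_1d` are all invented for illustration; none of them come from the infographic.

```python
def kmeans_1d(values, centroids, iterations=10):
    """Plain K-Means on 1-D data with caller-supplied starting centroids."""
    centroids = list(centroids)
    clusters = [[] for _ in centroids]
    for _ in range(iterations):
        # Assignment step: put each value in the cluster of its nearest centroid.
        clusters = [[] for _ in centroids]
        for v in values:
            nearest = min(range(len(centroids)),
                          key=lambda i: abs(v - centroids[i]))
            clusters[nearest].append(v)
        # Update step: move each centroid to the mean of its cluster
        # (an empty cluster keeps its previous centroid).
        new_centroids = [
            sum(c) / len(c) if c else centroids[i]
            for i, c in enumerate(clusters)
        ]
        if new_centroids == centroids:
            break  # assignments have stopped changing
        centroids = new_centroids
    return centroids, clusters


# Hypothetical monthly spend per shopper (made-up numbers).
spend = [10, 12, 11, 95, 100, 105]
centers, groups = kmeans_1d(spend, centroids=[0, 50])
print(centers)
print(groups)
```

With these made-up values the shoppers separate into a low-spend and a high-spend group. Real shopper data has many features, and a library implementation would normally be used instead.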

detailed-analysis (gemma3_27b-it-q8_0)

Okay, let's dissect this infographic ("Top AI Algorithms and Their Use-Cases") as a statement about Artificial Intelligence, applying several theoretical frameworks where they're relevant.

Visual Description

The infographic presents a grid of 20 common Machine Learning (ML) and Artificial Intelligence (AI) algorithms. Each algorithm is represented by a colorful, minimalist graphic alongside a concise description of a practical application.

- Layout: The grid arrangement suggests a categorization, but isn't strictly hierarchical. It's more of a 'catalog' of tools.

- Color Scheme: The use of varied, bright colors is visually appealing and perhaps intended to convey the diversity and dynamism of the field.

- Text: Concise application descriptions paired with the algorithm names. The style is very accessible – aimed at someone wanting a quick overview.

- Overall Impression: It's an introductory graphic, aiming to demystify AI by showing concrete examples. The emphasis is on application rather than deep technical detail. It's a promotional piece by Gina Costig, a LinkedIn personality, and therefore presents a positive and simplified view.

Critical Theory

From a Critical Theory perspective, the infographic highlights a crucial element of AI’s current state: its instrumental rationality. Critical Theory (particularly in the Frankfurt School tradition) critiques the prioritization of efficiency and functionality over ethical considerations and human emancipation. This infographic embodies that prioritization.

- Focus on Utility: Every entry is about what an algorithm does – how it can increase revenue, detect fraud, optimize processes. There's no mention of potential harms, biases, or the societal implications of these applications. It frames AI as a purely technical solution to problems, obscuring the power dynamics at play.

- Technological Determinism: The infographic subtly promotes the idea that these technologies are inevitable and inherently beneficial. It implies that if you have a problem (like predicting rainfall or detecting fraud), of course you should turn to an AI algorithm. This downplays the role of social, political, and economic factors that shape those problems in the first place.

- Commodification of Intelligence: The entire premise of using algorithms to "optimize" or "boost" things aligns with a broader trend of commodifying intelligence and reducing complex human activities to quantifiable metrics.

Marxist Conflict Theory

A Marxist lens immediately focuses on the power relations embedded in the development and deployment of these AI algorithms.

- Capital Accumulation: Many of the applications described directly contribute to capital accumulation. "Forecasting monthly revenue," "detecting fraudulent transactions" (protecting capital), "optimizing factory workflows" (increasing productivity and profit) are all geared towards boosting the financial bottom line for those who own the means of production.

- Labor Displacement: While not explicitly mentioned, the implicit promise of these algorithms is often labor reduction. Automating document summaries, optimizing logistics, or predicting customer behavior could all lead to job losses. The graphic doesn’t address the impact on the working class.

- Concentration of Power: The development and control of these algorithms are concentrated in the hands of large tech companies and wealthy institutions. This reinforces existing power imbalances. The infographic does not acknowledge this uneven distribution of technological power.

- The Algorithm as Managerial Tool: Many of the applications—loan approvals, categorizing shoppers, recognizing objects in videos—suggest algorithms are becoming a new form of managerial control, dictating outcomes and making decisions that previously involved human judgement.

Postmodernism

A postmodern reading would deconstruct the infographic's claim to objective knowledge about “Top AI Algorithms.”

- The Illusion of a Singular Truth: The title suggests a definitive ranking or categorization. Postmodernism would argue that there is no single "top" – that value judgments are always contextual and subjective.

- Discourse and Representation: The infographic constructs a particular narrative about AI – one that is positive, functional, and application-driven. It doesn't represent the full complexity of the field.

- Simulacra and Simulation: The glossy, simplified graphics can be seen as "simulacra" – copies without originals. They create an image of AI that might be divorced from the messy reality of its development and implementation.

- Fragmentation and Plurality: The grid layout, while visually pleasing, could be interpreted as reflecting the fragmented and rapidly evolving nature of the AI landscape. There’s no grand unified theory here, just a collection of tools.

Foucauldian Genealogical Discourse Analysis

Applying Foucault, we can examine the historical discourses that have shaped the development and presentation of these algorithms.

- Power/Knowledge: Foucault argued that power and knowledge are inextricably linked. This infographic exemplifies that. The ability to define what constitutes "top" AI algorithms grants power to the creator (Gina Costig) and reinforces particular ways of thinking about AI.

- Disciplinary Power: The focus on optimization and efficiency can be seen as a form of "disciplinary power"—shaping individuals and processes to conform to quantifiable norms. Algorithms are often used to monitor and control behavior.

- The Historical Construction of "Intelligence": The very idea of "artificial intelligence" is not natural or inevitable. It’s a historically constructed concept, evolving alongside changing technological capabilities and societal beliefs. Foucault would ask how this concept of "intelligence" has been used to justify certain forms of power.

Queer Feminist Intersectional Analysis

This is the area where the infographic is most lacking and where a critical analysis becomes especially important.

- Bias and Exclusion: AI algorithms are notoriously prone to reflecting and amplifying existing societal biases. Many of the applications shown (e.g., loan approvals, fraud detection, and even seemingly neutral ones like recommendation systems) have the potential to discriminate against marginalized groups. The infographic doesn’t acknowledge this issue at all.

- Gendered and Racialized Data: The data used to train these algorithms is often biased, reflecting historical inequalities. This can lead to discriminatory outcomes based on gender, race, class, and other factors.

- Lack of Representation: The tech industry, and specifically AI development, is notoriously lacking in diversity. This reinforces the risk of biased algorithms and the exclusion of different perspectives.

- The Normalization of Surveillance: Some applications (like recognizing objects in video feeds) raise concerns about surveillance and the erosion of privacy, which disproportionately impacts marginalized communities.
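One common way to surface the kind of bias described above is to compare outcome rates across groups. The sketch below computes approval rates per group and the gap between them. The group labels, the decisions, and the function names are hypothetical, and a rate gap on its own is only a first signal, not proof of discrimination.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return the share of approved applications for each group.

    `decisions` is a list of (group, approved) pairs.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {g: approved[g] / totals[g] for g in totals}


def parity_gap(decisions):
    """Largest difference in approval rate between any two groups."""
    rates = approval_rates(decisions)
    return max(rates.values()) - min(rates.values())


# Hypothetical loan decisions: (group label, approved?). Invented data.
sample = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", True), ("B", False), ("B", False), ("B", False),
]
print(approval_rates(sample))
print(parity_gap(sample))
```

In this invented sample, group A is approved three times out of four and group B once out of four. An audit of a real system would treat a gap like that as a reason to investigate further.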

Conclusion

The infographic, while seemingly neutral, is deeply embedded in a network of power relations, historical discourses, and ideological assumptions. A critical examination reveals that it presents a sanitized and incomplete picture of AI, focusing on its potential benefits while obscuring its potential harms. Applying these theoretical frameworks demonstrates that AI is not simply a set of technical tools but a complex social, political, and economic force with far-reaching consequences.

simple-description (llama3.2-vision_11b)

The meme is a humorous list of "Top AI Algorithms" whose "use cases" are presented as ridiculous or humorous. The list includes algorithms like "Linear Regression" with a use case of "Forecasting monthly revenue growth" and "Neural Networks" with a use case of "Recognizing objects in video feeds". The meme pokes fun at the idea of calling mundane tasks such as these a "use case" for AI algorithms.