First Seen

2025-07-29T18:29:41+00:00

simple-description (qwen3.5_2b-q8_0)

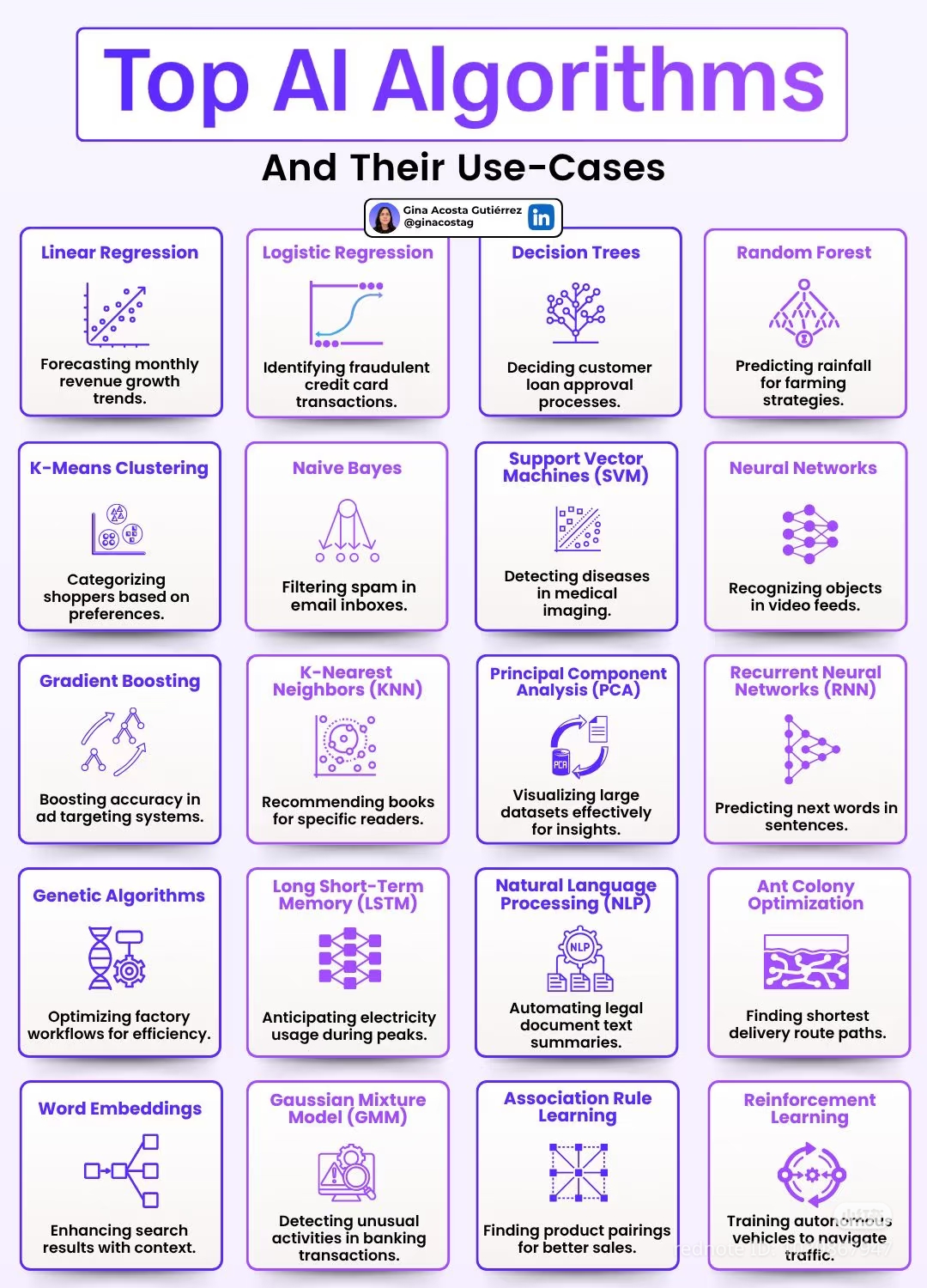

This infographic serves as a visual guide for Artificial Intelligence algorithms, explaining how they process data to solve real-world problems like fraud detection or farming strategies. It lists specific tools such as "Linear Regression" (for forecasting revenue) and shows icons that help users visualize how these computers connect and make decisions. Essentially, it breaks down technical jargon into everyday terms so technology is accessible to everyone.
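As a rough illustration of the "Linear Regression" use case mentioned above (forecasting revenue), here is a minimal sketch that fits a straight line to monthly figures by ordinary least squares and extrapolates one month ahead. The revenue numbers and the helper name `fit_line` are hypothetical and chosen only for the example; nothing here comes from the infographic itself.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of squared deviations in x, and of co-deviations in x and y.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical monthly revenue (arbitrary units), for illustration only.
months = [1, 2, 3, 4, 5, 6]
revenue = [100.0, 110.0, 120.0, 130.0, 140.0, 150.0]

slope, intercept = fit_line(months, revenue)
forecast_month_7 = slope * 7 + intercept
print(f"growth per month: {slope:.1f}, forecast for month 7: {forecast_month_7:.1f}")
```

Real revenue data is rarely this linear, so a straight-line extrapolation like this is best read as a baseline rather than a reliable forecast.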

detailed-analysis (gemma3_27b-it-q8_0)

This analysis examines the infographic "Top AI Algorithms and Their Use-Cases," treating Artificial Intelligence as its core category and applying the requested theoretical frameworks where applicable.

Visual Description

The infographic is a grid-style visual presenting 20 AI algorithms in a 4x5 layout. Each algorithm is represented by an icon and a concise description of a typical use case. The overall aesthetic is clean and modern, with a pastel color palette. The arrangement does not appear to follow strict relationships between algorithms; it seems designed to showcase breadth, loosely grouping algorithms of similar complexity or breadth of application. The top row focuses on more statistical algorithms (Regression, Decision Trees), and the bottom row on more modern, complex neural network approaches. The presence of a LinkedIn profile ("Gina Acosta Gutierrez") suggests it is meant for a professional audience, likely people in data science or technology seeking a quick overview.

Foucauldian Genealogical Discourse Analysis

From a Foucauldian perspective, this infographic is a fascinating example of how "AI" has become a discourse – a system of thought, language, and practices that shapes our understanding of the world.

- Power/Knowledge: The infographic doesn't simply present AI algorithms; it constructs them as "top" algorithms. This implicitly establishes a hierarchy, a regime of truth about which approaches are most valuable. The selection itself is an exercise of power, as it dictates which algorithms are deemed worthy of attention and dissemination. It reinforces the idea that these algorithms are “essential” knowledge for anyone working in the field, contributing to the expertise and authority of those who design and deploy them.

- Genealogy of Algorithms: The infographic elides the historical development of these algorithms. It doesn't show how they evolved from earlier techniques (e.g., how Logistic Regression builds on earlier statistical models). A genealogical analysis would unpack the contingencies, the accidents, and the struggles that led to the current prominence of these particular techniques. It would reveal the human decisions that shaped their evolution, and how past applications (often in military or surveillance contexts) have influenced their current forms.

- Normalization: By presenting these algorithms as readily usable tools for specific tasks (fraud detection, loan approvals, targeting ads), the infographic normalizes their application in various domains. This normalization risks obscuring the potential ethical implications and biases embedded within these systems.

Critical Theory

Applying Critical Theory, we can examine how the infographic reflects underlying social and political structures.

- Instrumental Rationality: The focus on "use-cases" embodies Max Horkheimer and Theodor Adorno’s concept of instrumental rationality. The algorithms are presented solely based on their utility, their ability to achieve specific goals (e.g., increase revenue, optimize efficiency). This prioritizes means over ends, and potentially ignores broader questions about the societal impact of these technologies.

- Reification: The algorithms themselves are reified – treated as objective, self-contained entities divorced from the social contexts in which they are created and deployed. The infographic doesn't acknowledge the role of human biases in the data used to train these algorithms or in the choices made during their development.

- Domination through Technology: The use cases presented often involve surveillance, control, or manipulation (fraud detection, spam filtering, ad targeting). This aligns with Critical Theory’s concern that technology can be used to reinforce existing power structures and dominate individuals.

Marxist Conflict Theory

From a Marxist perspective, the infographic highlights the relationship between technological development and the capitalist mode of production.

- Forces and Relations of Production: The AI algorithms represent the “forces of production” – the technologies used to increase efficiency and productivity. The "use-cases" reflect the “relations of production” – how these technologies are used to maintain and enhance capital accumulation. For example, optimizing factory workflows (Genetic Algorithms) and predicting electricity usage (LSTM) directly contribute to increasing profit margins for businesses.

- Commodification of Intelligence: The infographic implicitly commodifies intelligence. Algorithms, which can be seen as codified forms of human intelligence, are presented as tools for extracting value. This commodification reinforces the alienation of labor, as tasks once performed by human workers are increasingly handed over to algorithms.

- Class Struggle: The use of AI for tasks like loan approval (Decision Trees) or fraud detection (Logistic Regression) can exacerbate existing class inequalities. Biases in the data used to train these algorithms may lead to discriminatory outcomes, disproportionately affecting marginalized communities.

Postmodernism

A postmodern lens would deconstruct the idea of a "top" AI algorithm.

- Rejection of Grand Narratives: The infographic implies there's a clear progression or hierarchy of AI algorithms. Postmodernism challenges this notion, arguing that there is no single, objective truth about which algorithms are best. Different algorithms are appropriate for different contexts, and their effectiveness is always relative.

- Deconstruction of Meaning: The infographic presents algorithms with neat, defined “use-cases.” A postmodern analysis would question the stability of these meanings. An algorithm can be repurposed, its function can shift, and its impact can be unpredictable. The very idea of a fixed “use-case” is an illusion.

- Simulacra and Simulation: The presentation of these algorithms, their icons and simplified descriptions, can be seen as a simulacrum – a copy without an original. The infographic doesn’t convey the complex mathematical foundations or the challenges of implementing these algorithms; it offers a simplified representation that may bear little resemblance to the real thing.

Queer Feminist Intersectional Analysis

While perhaps less direct than the other frameworks, a Queer Feminist Intersectional Analysis reveals potential biases and power dynamics embedded within the infographic.

- Algorithmic Bias and Exclusion: The use cases disproportionately focus on applications that benefit existing power structures (finance, marketing, industrial optimization). There's a lack of representation for applications that might address issues of social justice, gender equity, or LGBTQ+ rights. This reinforces the idea that AI is primarily a tool for maintaining the status quo.

- Data and Representation: The effectiveness of these algorithms depends on the data they are trained on. If the data reflects existing societal biases (e.g., gender stereotypes, racial discrimination), the algorithms will perpetuate and amplify those biases.

- Absence of Care and Relationality: The emphasis on optimization and efficiency ignores the importance of care, empathy, and relationality. The focus on technological solutions often obscures the need for human intervention and ethical considerations.
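The point above about data and representation can be made concrete with a toy sketch. It assumes a hypothetical set of historical loan decisions in which group "A" was approved 70% of the time and group "B" 40% of the time, and a deliberately naive "model" that predicts the majority outcome for each group. All names and numbers are invented for illustration; real systems are more complex, and this shows only one way a disparity in training data can carry over, and here widen, in predictions.

```python
from collections import defaultdict

# Hypothetical historical decisions as (group, approved) pairs.
history = (
    [("A", 1)] * 70 + [("A", 0)] * 30 +
    [("B", 1)] * 40 + [("B", 0)] * 60
)


def train_majority(records):
    """Learn, per group, whichever outcome was more common in the data."""
    counts = defaultdict(lambda: [0, 0])  # group -> [denied, approved]
    for group, approved in records:
        counts[group][approved] += 1
    return {g: int(c[1] > c[0]) for g, c in counts.items()}


def approval_rate(records, group):
    """Fraction of records for the given group that were approved."""
    outcomes = [a for g, a in records if g == group]
    return sum(outcomes) / len(outcomes)


model = train_majority(history)

# Gap between groups in the historical data versus in the model's output.
historical_gap = approval_rate(history, "A") - approval_rate(history, "B")
predicted_gap = model["A"] - model["B"]

print(f"historical approval gap: {historical_gap:.2f}")
print(f"predicted approval gap:  {predicted_gap:.2f}")
```

In this sketch the group label is the only feature, so the model can do nothing except reproduce the historical pattern, and rounding to a majority vote turns a partial disparity into a total one. Models with richer features can behave differently, which is why the outcome has to be measured rather than assumed.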

In conclusion, the infographic isn't just a neutral presentation of AI algorithms. It's a culturally situated artifact that reflects and reinforces specific power dynamics, ideologies, and ways of knowing. Applying these theoretical frameworks reveals the hidden assumptions and potential consequences of this seemingly objective representation of artificial intelligence.

simple-description (llama3.2-vision_11b)

The meme is presented as a humorous list of the "Top 20 AI Algorithms and Their Use Cases": popular AI algorithms paired with use cases described as humorous and absurd. The image consists of 20 boxes, each titled with the name of an AI algorithm and containing a description of a use case for it. For example, "Linear Regression" is paired with "Forecasting monthly revenue growth trends" and "K-Means Clustering" with "Categorizing shoppers based on preferences". The image is meant to be humorous and satirical, poking fun at the idea that these AI algorithms have such absurd use cases.