First Seen

2025-08-07T04:48:04+00:00

simple-description (qwen3.5_2b-q8_0)

This infographic uses a stylized, colorful snake shape to create a visual timeline of major artificial intelligence milestones, essentially acting as an AI-generated news ticker. The "meme" aspect lies in its absurdity: it takes technical innovations like the Mamba state space model architecture (linking them to Nvidia and AI21 Labs) and compares them to real-world events like NBA basketball games or university conferences. By connecting specific model launches (such as Microsoft's Phi-4-mini-flash-reasoning or Tencent's Hunyuan TurboS) to sports and industry trends, it humorously suggests that even the most complex scientific breakthroughs in AI are just another trend in human culture, culminating in hybrid models like the "Mamba-Llama" created by together.ai.

detailed-analysis (gemma3_27b-it-q8_0)

Let's break down this image, a timeline charting the rise of "hybrid" Large Language Models (LLMs), through several theoretical lenses relevant to Artificial Intelligence. Only the lenses that actually apply are used.

Visual Description

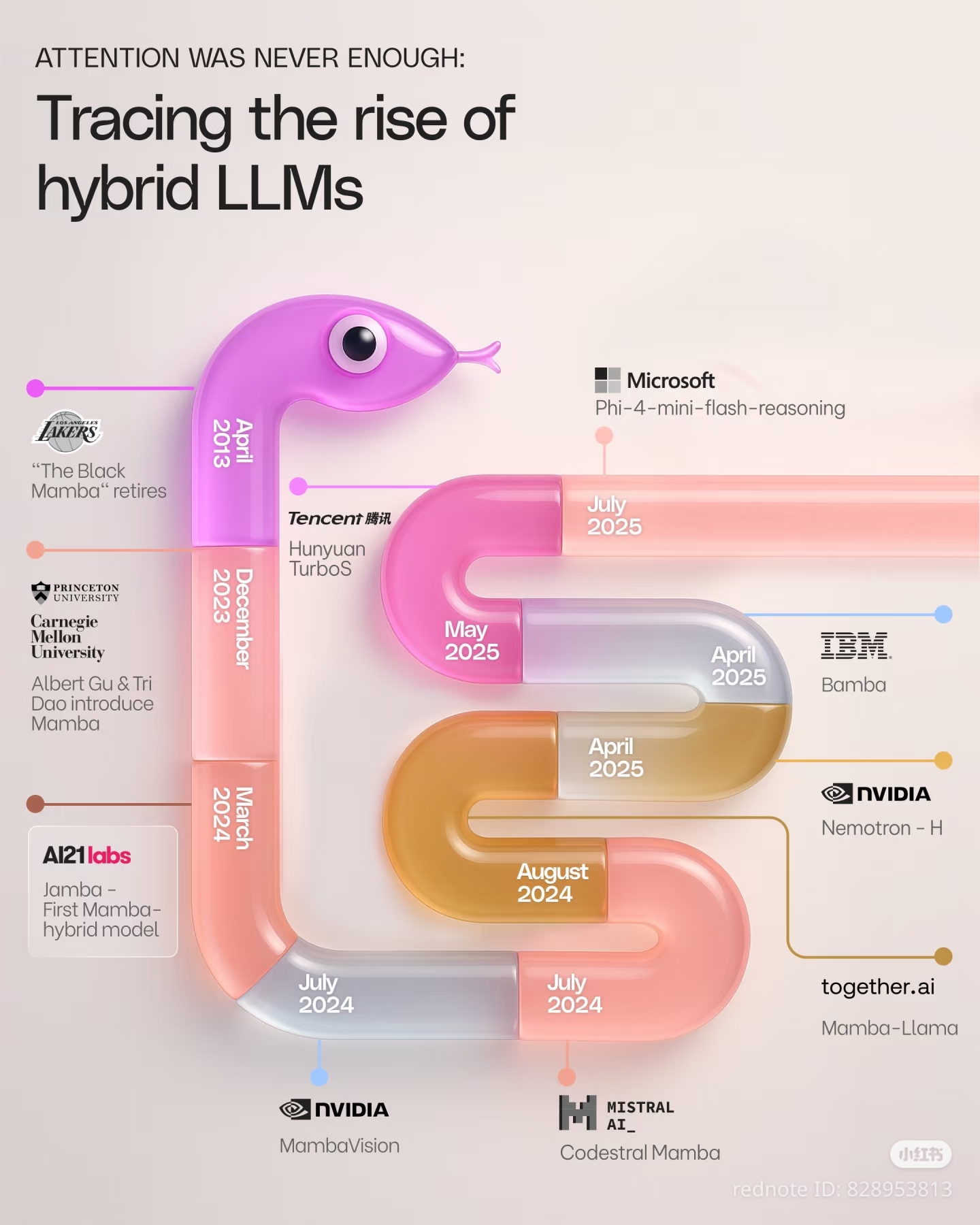

The image is a winding, snake-like timeline, playfully labeled "Attention Was Never Enough: Tracing the Rise of Hybrid LLMs." The snake metaphor is central, and the head is a rendered, cartoonish eye representing a "Mamba" model. The timeline maps key developments in hybrid LLM architectures, starting in 2023 and projecting into 2025.

The snake’s body (the timeline) shows a series of nodes representing specific models and releases (e.g., "The Black Mamba" retiring, Jamba, Bamba, Phi-3). Each node includes the developing organization (e.g., Microsoft, NVIDIA, Tencent) and the date of release or development. The color palette uses muted shades of purple, pink, and gray. The overall aesthetic is playful and deliberately avoids the stark, technological look often associated with AI visualizations. The "rednote ID" at the bottom suggests that the image was shared on rednote (Xiaohongshu), a social media platform.

Foucauldian Genealogical Discourse Analysis

This image is, fundamentally, a discourse about the evolution of LLM architecture. A Foucauldian approach looks at how knowledge/power regimes shape what is considered “progress” in AI.

- Archaeology of Knowledge: Before "hybrid LLMs," the dominant discourse centered almost entirely on the Transformer architecture (and particularly the attention mechanism). The title, "Attention Was Never Enough," signals a disruption of this existing paradigm. The timeline represents a historical excavation revealing the moments where this shift began—the "emergence" of alternatives.

- Genealogy of Power/Knowledge: We can trace the “genealogy” of this architectural shift. The development of Mamba (which the timeline pairs with the “retirement” of "The Black Mamba") represents a moment where alternative architectures, like State Space Models (SSMs), started to be explored. The subsequent development of numerous "Mamba-inspired" models by various organizations (NVIDIA, Microsoft, Tencent, etc.) indicates a diffusion of power/knowledge. It’s not a single discovery, but a series of explorations and adaptations across multiple actors. This is also a power play: whoever gets to name the dominant architectural trend (e.g., "Mamba") exerts control over the discourse.

- Disciplinary Power: The timeline itself functions as a disciplinary mechanism. It constructs a "correct" history, a linear progression that establishes what is deemed legitimate development and innovation. Models that don't fit onto this timeline are implicitly marginalized. It enforces norms about what is considered valuable research.

Critical Theory

The image invites consideration of the underlying assumptions driving AI development. Critical Theory asks us to examine the societal impacts of technological advancements.

- Instrumental Reason: The relentless pursuit of architectural improvements—faster, more efficient, more powerful LLMs—can be seen as a manifestation of instrumental reason. The focus is on how to achieve a technical goal (better LLMs) without necessarily questioning why we are pursuing that goal in the first place. The timeline represents a drive toward optimization and efficiency for its own sake.

- Technological Determinism: The image could be interpreted as subtly reinforcing technological determinism. It presents a linear narrative of "progress" as if the development of hybrid LLMs was inevitable. This downplays the role of human choices, social forces, and economic incentives in shaping technological trajectories.

- Commodification of Intelligence: LLMs, and their underlying architectures, are ultimately commercial products. The involvement of large corporations (NVIDIA, Microsoft, Tencent, IBM) highlights the commodification of intelligence and the drive for profit in the AI industry. The "race" to develop better models is fueled by market competition.

Postmodernism

From a postmodern perspective, the image deconstructs the idea of a singular, monolithic "truth" about LLM architectures.

- Rejection of Grand Narratives: The timeline challenges the grand narrative of the Transformer as the sole, ultimate solution. It shows that there are multiple pathways and competing approaches.

- Fragmentation & Simulacra: The numerous "Mamba-inspired" models suggest a proliferation of variations—a fragmentation of the original idea. The “Mamba” concept itself may become a simulacrum—a copy of a copy, losing its connection to any original "essence."

- Intertextuality: The use of the "snake" metaphor (and "The Black Mamba" reference) creates intertextual connections to popular culture, adding layers of meaning and challenging the image’s purely technical presentation.

In conclusion, this image is far more than a simple timeline. It’s a visual artifact that reflects the power dynamics, underlying assumptions, and shifting discourses within the field of Artificial Intelligence. Applying these theoretical lenses helps us move beyond a purely technical understanding and consider the broader societal implications of these developments.

simple-description (llama3.2-vision_11b)

The meme is a humorous representation of the rapid development of artificial intelligence (AI) and its impact on the world. The image shows a "snake" of nodes, each representing a different AI model released in recent years, with the most recent ones at the "head" of the snake. The text above the image reads "ATTENTION WAS NEVER ENOUGH: Tracing the rise of hybrid LLMs".