First Seen

2025-09-11T16:03:08+00:00

simple-description (qwen3.5_2b-q8_0)

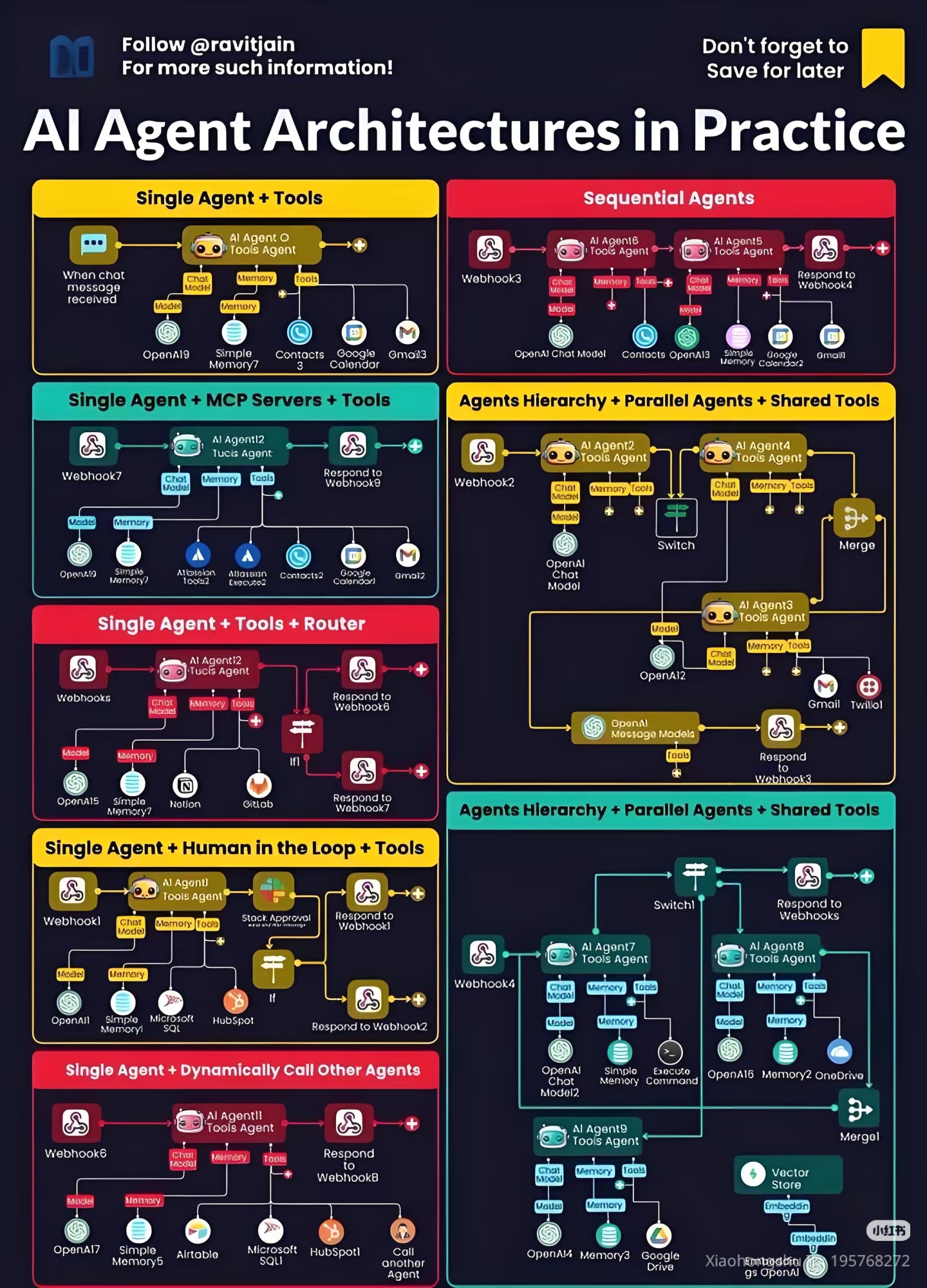

This infographic is a technical breakdown for developers of different ways to structure AI agents. It presents architectural patterns that range from a single agent with tools to hierarchical systems with parallel agents and shared resources. The central title is "AI Agent Architectures in Practice," and the patterns it names include 'Sequential Agents' and 'MCP Servers + Tools'.

detailed-analysis (gemma3_27b-it-q8_0)

This analysis first describes the diagram "AI Agent Architectures in Practice" as a depiction of AI systems, then explores its implications through a few applicable critical theory frameworks. The visualization is very dense, so the aim is a focused, layered interpretation.

Visual Description

The image presents a series of diagrams illustrating different configurations of "AI Agents" connected to various tools and services. At its core, the visual represents systems trying to mimic human agency through software. Each diagram shows a set of interconnected blocks representing:

- AI Agents: These are the core processing units, often labeled "AI Agent Tools Agent" or "AI Agent 2 Tools Agent." They act as decision-makers.

- Tools: These are functions or services the agents can access – things like "Chat Model," "Memory," "Google Contacts," "Gmail," "HubSpot," "OpenAI," and "Webhooks." Essentially, the agent's capabilities are defined by the tools it can leverage.

- Connections/Flow: Arrows and lines dictate the direction of data flow and interaction between agents and tools. This shows how information is processed and decisions are made.

- Architectures: The diagrams demonstrate a progressive increase in complexity:

  - Single Agent + Tools: Simplest form - one agent interacting with tools.

  - Sequential Agents: Agents linked in a chain, passing data sequentially.

  - Parallel Agents + Shared Tools: Multiple agents using the same tools concurrently.

  - Agent + Human in the Loop: Human intervention/oversight is included.

  - Dynamically Call Other Agents: Agents that can trigger/call upon other agents.

  - Single Agent + Router Agent: One agent that directs requests to different tools.

- Webhooks: These are prominent throughout the diagram and seem to function as a core connective tissue between agents and services.

The overall aesthetic is reminiscent of a system architecture diagram – the kind you'd find describing a software system. It’s a visualization of a potential intelligent system.
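The patterns named in the diagram can be made concrete with a small sketch. The Python below is an illustrative toy, not a reconstruction of the workflows in the image: the `Agent` class, the two stand-in tools (`lookup_contact`, `draft_email`), and the `run_*` helpers are all hypothetical names, and a real agent would call a chat model rather than apply its tools in a fixed order.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

Tool = Callable[[str], str]


class Agent:
    """Toy agent (assumption): applies each of its tools to the message in order."""

    def __init__(self, name: str, tools: List[Tool]) -> None:
        self.name = name
        self.tools = tools

    def run(self, message: str) -> str:
        for tool in self.tools:
            message = tool(message)
        return message


# Hypothetical stand-ins for tools such as "Google Contacts" or "Gmail".
def lookup_contact(text: str) -> str:
    return text + " [contact found]"


def draft_email(text: str) -> str:
    return text + " [email drafted]"


def run_sequential(agents: List[Agent], message: str) -> str:
    """Sequential Agents: each agent's output is the next agent's input."""
    for agent in agents:
        message = agent.run(message)
    return message


def run_parallel(agents: List[Agent], message: str) -> List[str]:
    """Parallel Agents: every agent receives the same input concurrently."""
    with ThreadPoolExecutor() as pool:
        # pool.map returns results in the same order as `agents`.
        return list(pool.map(lambda agent: agent.run(message), agents))


def run_router(routes: Dict[str, Agent], default: Agent, message: str) -> str:
    """Router: pick the first agent whose keyword appears in the message."""
    lowered = message.lower()
    for keyword, agent in routes.items():
        if keyword in lowered:
            return agent.run(message)
    return default.run(message)


def run_with_human(agent: Agent, message: str,
                   approve: Callable[[str], bool]) -> str:
    """Human in the Loop: a reviewer callback accepts or rejects the draft."""
    draft = agent.run(message)
    return draft if approve(draft) else "rejected by reviewer"


if __name__ == "__main__":
    contacts = Agent("contacts", [lookup_contact])
    mailer = Agent("mailer", [draft_email])
    print(run_sequential([contacts, mailer], "Ping Ada"))
    print(run_parallel([contacts, mailer], "Ping Ada"))
    print(run_router({"email": mailer}, contacts, "Send an email to Ada"))
    print(run_with_human(mailer, "Ping Ada", approve=lambda draft: True))
```

The keyword match in `run_router` is a deliberate simplification; in practice the routing decision would more likely come from a model. Sharing tools between parallel agents amounts to passing the same tool function to more than one `Agent`.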

---

Critical Theory

Here's where we can start unpacking the implications. Several critical theory lenses are applicable, but I'll focus on the most pertinent ones.

1. Foucauldian Genealogical Discourse Analysis

Michel Foucault's work on power/knowledge relations and discourse is highly relevant here.

- Discourse of Control: The diagram embodies a discourse of control – an attempt to map, quantify, and manage agency. Each connection, each tool, represents a point of potential observation and manipulation. The pursuit of AI agents is fundamentally an attempt to create systems capable of exercising control.

- Genealogy of Agency: Foucault's genealogy traces the historical evolution of concepts. The idea of “agency” itself is being reconstructed within the AI paradigm. The diagram reveals a shift: agency is no longer inherently human; it’s being engineered, fragmented, and distributed across algorithmic processes. The historical concept of agency is being “disarticulated” and re-assembled in code.

- Panoptic Potential: While not a literal panopticon, the architecture suggests a panoptic potential. The flow of data and the central role of the agents create a system where actions are potentially traceable and analyzable. The monitoring of data flows is intrinsic to this system's functioning.

- Subjectification: How does this architecture shape us? As we increasingly interact with these systems, our own actions become data points within them. This contributes to a process of subjectification where our behavior is modulated by the logic of the algorithm.

2. Marxist Conflict Theory

- Automation & Labor: The core function of many of these agent architectures is automation. From automating email responses (Gmail) to managing customer relations (HubSpot), these agents are designed to displace or modify human labor. This aligns with Marx's analysis of capital's drive to replace human labor with machinery to increase profits.

- The Control of the Means of Computation: The tools and infrastructure represented in the diagram – OpenAI, Google Contacts, etc. – represent the "means of computation." These are increasingly concentrated in the hands of a few powerful tech corporations. This concentration of power raises questions about who controls the technology that shapes our world.

- Alienation: The automated, seemingly “intelligent” systems represented here further alienate humans from the products of their labor. If an AI agent can handle a task, the human's skill and contribution are devalued. This can lead to feelings of powerlessness and disconnect.

- Class Conflict: The benefits of these advancements – increased efficiency, reduced costs – are likely to accrue disproportionately to the owners of capital, potentially exacerbating existing class inequalities.

3. Postmodernism

- The Death of the Author/Agent: The idea of a singular, coherent “agent” is destabilized. Instead, agency is distributed, fragmented, and contingent upon the interactions between various components. The "author" of a decision is no longer a clear entity. This echoes postmodern critiques of authorship and the idea of a unified self.

- Simulacra & Simulation: The AI Agent itself can be seen as a simulacrum – a representation that comes to stand in for the real thing. The agent simulates intelligence and agency, but lacks genuine consciousness or understanding. The image suggests a world increasingly populated by simulations.

- Hyperreality: As these systems become more prevalent, the line between real and simulated interaction blurs. This contributes to a state of hyperreality, where the simulated versions of experiences become more real than the real thing.

---

In conclusion:

This diagram isn’t just about technical architecture; it's a visual representation of a fundamental shift in power, labor, and the very concept of agency. Critical theory frameworks provide us with tools to analyze the potential consequences of these developments and to question the assumptions that underlie the design and deployment of these systems. The image is a prompt for considering how AI agents are not neutral tools, but rather embedded within complex social, political, and economic systems.


simple-description (llama3.2-vision_11b)

The meme is a humorous representation of the complexities of AI development, particularly in the context of AI agents and their interactions with humans. The image is a flowchart that shows the various interactions between AI agents and humans, with each box representing a different type of interaction. The text "Don't forget to save for later" is a humorous comment on the importance of saving data in AI development, with the image serving as a visual representation of the many interactions between AI agents and humans.
