First Seen

2025-07-30T16:44:31+00:00

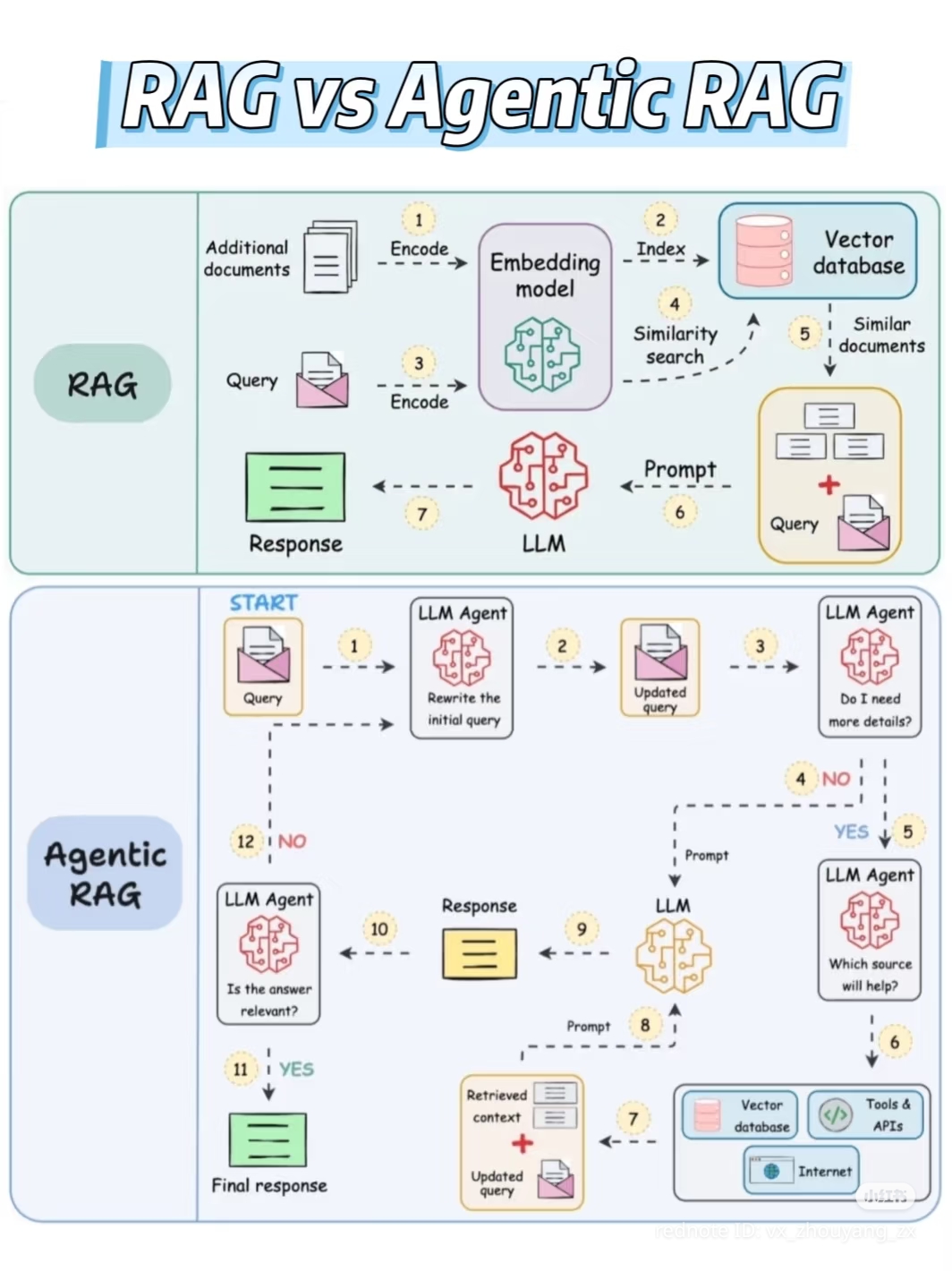

simple-description (qwen3.5_2b-q8_0)

This infographic compares two approaches in artificial intelligence: simple RAG in the top section and the more advanced Agentic RAG in the bottom section. The top section shows traditional RAG as a reactive process. The system looks up documents similar to your query in a vector database and passes them to the model to answer, without making any decisions along the way. In contrast, the bottom section shows Agentic RAG as a proactive system. Instead of just reading what was retrieved, an agent acts like a helpful assistant: it checks whether it needs more information, searches the web or calls tools, and retrieves what it needs before forming a final answer.

detailed-analysis (gemma3_27b-it-q8_0)

Okay, let's break down this meme "RAG vs. Agentic RAG" from an Artificial Intelligence perspective, and then apply the requested theoretical lenses where applicable.

Overall Context: This meme illustrates the evolution of Retrieval-Augmented Generation (RAG) in Large Language Models (LLMs). RAG is a technique used to enhance LLMs by grounding their responses in external knowledge sources, improving accuracy and reducing "hallucinations" (generating factually incorrect information). The meme contrasts the original, simpler RAG model with a more sophisticated, "agentic" version.

---

1. Visual Description

The meme is divided into two sections, labeled "RAG" and "Agentic RAG". Both sections are represented as flowcharts.

- RAG (Top Section): A straightforward linear process.

  - A user makes a Query.

  - The query and additional documents are Encoded into embeddings.

  - These embeddings are used for Similarity Search within a Vector Database.

  - Similar Documents are retrieved.

  - The original Query is combined with the retrieved documents into a Prompt and sent to the LLM.

  - The LLM generates a Response.

- Agentic RAG (Bottom Section): A more complex, iterative process involving an LLM Agent at multiple stages.

  - A user starts with a Query.

  - The LLM Agent rewrites the initial query.

  - The agent then asks "Do I need more details?" and branches on a "YES" or "NO" response.

  - If "YES", the agent determines which Tools/APIs would be helpful.

  - The agent retrieves data from the Vector Database and the Internet.

  - A prompt based on the retrieved information is passed to the LLM.

  - The LLM generates a Response.

  - The agent then asks "Is the answer relevant?" and branches on a "YES" or "NO" response.

  - If "YES", a final response is generated.
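The top-section pipeline (encode, similarity search, prompt, response) can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not how any particular system implements it. A toy bag-of-words `embed` function stands in for a real embedding model. The hypothetical `VectorDatabase` class is just an in-memory list. The hypothetical `fake_llm` stub replaces an actual LLM call. None of these names come from the meme.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    # Hypothetical toy "embedding": a bag-of-words term-count vector.
    # A real RAG system would call an embedding model here instead.
    return Counter(text.lower().split())


def cosine_similarity(a: Counter, b: Counter) -> float:
    # Dot product over shared terms divided by the product of the vector norms.
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class VectorDatabase:
    """Hypothetical in-memory stand-in for a vector database."""

    def __init__(self, documents: list[str]):
        # Encode the additional documents once, up front.
        self.entries = [(embed(doc), doc) for doc in documents]

    def similarity_search(self, query: str, k: int = 2) -> list[str]:
        # Encode the query, score every stored document, return the top-k.
        q = embed(query)
        ranked = sorted(self.entries, key=lambda e: cosine_similarity(q, e[0]), reverse=True)
        return [doc for _, doc in ranked[:k]]


def build_prompt(query: str, documents: list[str]) -> str:
    # Combine the original query with the retrieved documents into one prompt.
    context = "\n".join(f"- {d}" for d in documents)
    return f"Context:\n{context}\n\nQuestion: {query}"


def fake_llm(prompt: str) -> str:
    # Hypothetical stub standing in for a real LLM call.
    return f"[stub LLM response to a {len(prompt)}-character prompt]"


def rag(query: str, db: VectorDatabase, k: int = 2) -> str:
    # Linear pipeline: retrieve similar documents, build the prompt, generate.
    docs = db.similarity_search(query, k)
    return fake_llm(build_prompt(query, docs))
```

For example, suppose the store holds three documents: "RAG retrieves documents from a vector database", "Bananas are yellow", and "Agents can call tools and APIs". Then `similarity_search("how does RAG retrieve documents", k=1)` returns only the first one, because it is the only document that shares terms with the query. Note that nothing in `rag` makes a decision. Every query follows the same fixed path.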

The core difference is that Agentic RAG doesn't simply retrieve information and pass it to the LLM. It actively refines the query, decides what additional information to seek, and evaluates the relevance of the results. It behaves more like an intelligent agent acting on behalf of the user.
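The bottom-section loop can likewise be sketched as plain Python control flow. Every helper here is a hypothetical stub: `rewrite_query`, `needs_more_details`, `choose_tools`, `generate`, `is_relevant`, and the two search functions. In a real system, each would be an LLM call or a tool/API call. Two behaviors are assumptions, because the flowchart description does not spell them out. First, a "NO" to "Do I need more details?" skips retrieval. Second, a "NO" to "Is the answer relevant?" sends the agent back for another round, capped by `max_rounds`.

```python
def rewrite_query(query: str) -> str:
    # Hypothetical stub for the agent's query-rewriting step.
    return query.strip().rstrip("?") + "?"


def needs_more_details(query: str, context: list[str]) -> bool:
    # "Do I need more details?" Stub: YES until some context has been gathered.
    return len(context) == 0


def search_vector_db(query: str) -> list[str]:
    return [f"vector-db result for '{query}'"]  # hypothetical stub


def search_internet(query: str) -> list[str]:
    return [f"web result for '{query}'"]  # hypothetical stub


# Hypothetical registry of tools/APIs the agent may call.
TOOLS = {"vector_db": search_vector_db, "internet": search_internet}


def choose_tools(query: str) -> list[str]:
    # Stub: a real agent would pick tools based on the query; this picks all of them.
    return list(TOOLS)


def generate(query: str, context: list[str]) -> str:
    # Hypothetical stub for the LLM generating a response from the prompt.
    return f"Answer to {query} using {len(context)} source(s)"


def is_relevant(answer: str, context: list[str]) -> bool:
    # "Is the answer relevant?" Stub: relevant if any sources were used.
    return bool(context)


def agentic_rag(query: str, max_rounds: int = 3) -> str:
    context: list[str] = []
    q = rewrite_query(query)
    answer = ""
    for _ in range(max_rounds):
        # Assumption: a NO here skips retrieval and goes straight to generation.
        if needs_more_details(q, context):
            for name in choose_tools(q):
                context.extend(TOOLS[name](q))
        answer = generate(q, context)
        if is_relevant(answer, context):
            return answer
        # Assumption: a NO to the relevance check loops back with a rewritten query.
        q = rewrite_query(q)
    return answer  # give up after max_rounds and return the last attempt
```

The `max_rounds` cap is a design choice that keeps the relevance check from looping forever. The stubs are deliberately trivial, so the sketch shows the control flow rather than the components. Comparing `agentic_rag` with the linear `rag` pipeline shows where the agent makes its decisions.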

---

2. Foucauldian Genealogical Discourse Analysis

This meme exposes the shifting discourse around knowledge and intelligence within AI development. The initial RAG model embodies a "truth-claim" that assumes knowledge exists as static data that can be accurately retrieved and presented. The Vector Database is a site of power/knowledge – it organizes and categorizes information, defining what is considered "similar" and therefore relevant. The LLM then acts as a conduit for this pre-defined knowledge.

Agentic RAG, however, demonstrates a shift. It destabilizes the assumption of static, readily available truth. The LLM Agent questions the initial query ("Do I need more details?"), intervenes in the retrieval process (choosing tools/APIs), and evaluates the output ("Is the answer relevant?"). This is a move towards a more provisional understanding of knowledge that recognizes its context-dependent and potentially incomplete nature.

The genealogy traces the evolution from a system that presented information as knowledge to a system that actively constructs knowledge through iteration and negotiation. The Agent is not simply a tool for retrieval, but an actor within the process of knowledge production.

---

3. Critical Theory

From a Critical Theory perspective, the meme highlights the way AI systems can reinforce existing power structures or, potentially, challenge them.

- RAG as Reinforcement: The basic RAG model could be seen as reinforcing existing biases present in the data stored in the Vector Database. If the database reflects societal inequalities, the LLM's responses will inevitably reflect those same inequalities.

- Agentic RAG as Potential Challenge: Agentic RAG could be seen as offering a pathway to mitigate this. The Agent's ability to seek out information from diverse sources (the Internet, in addition to the vector database) and re-evaluate relevance introduces a level of agency that might disrupt the perpetuation of biases.

However, the agency of the LLM Agent is still designed and controlled by humans. Critical theory would question who controls the algorithms that govern the Agent’s decision-making process and the potential for those algorithms to be designed to serve specific interests.

---

4. Marxist Conflict Theory

The meme reveals a potential shift in the "means of intellectual production."

- Traditional Knowledge Work: In a pre-AI world, humans controlled the entire process of research, analysis, and knowledge dissemination.

- RAG as Automation of Research: RAG represents a partial automation of the research process. The human query initiates the process, but the retrieval of information and its presentation to the LLM can be seen as a form of "deskilling" of the researcher.

- Agentic RAG as (Potentially) Restoring Control: Agentic RAG, with its iterative and evaluative processes, could be interpreted as a partial restoration of human control. The LLM Agent’s actions aren’t simply automating tasks but making decisions, and the human operator can observe and potentially intervene in that process.

However, this is a complex issue. From a Marxist perspective, the ownership and control of the AI infrastructure (the LLM, the Vector Database, the APIs) remain crucial. The benefits of this “restored control” might be unevenly distributed, with those who own the AI technology accruing the most power and profit.

---

5. Postmodernism

The meme directly engages with postmodern themes of fragmentation, deconstruction, and the rejection of grand narratives.

- Deconstruction of "Truth": The move from a simple RAG to Agentic RAG deconstructs the notion of a single, authoritative "truth." The Agentic system acknowledges that knowledge is contingent, contextual, and constantly being revised.

- Emphasis on Process: The flowchart format of the meme emphasizes process over product. It’s not about the final answer, but about the iterative journey of inquiry.

- Rejecting the Meta-Narrative: The traditional view of "knowledge" as something fixed and readily available is challenged. Agentic RAG suggests that knowledge is not found, but constructed through dialogue and interaction.

---

6. Queer Feminist Intersectional Analysis

This lens would examine how power dynamics are embedded within the design and implementation of these systems and how they impact marginalized groups.

- Bias in Data: The Vector Database likely contains biases reflecting historical and ongoing social inequalities (gender, race, sexuality, ability, etc.). The RAG model can reproduce these biases and potentially amplify them.

- Agent as Masked Privilege: The "Agency" of the LLM Agent is a designed function, and it’s important to ask: Whose agency is being represented? Is the Agent programmed to prioritize the perspectives of dominant groups?

- Intersectional Queries: Agentic RAG could potentially be used to address intersectional queries that are often overlooked by simpler systems. The Agent's ability to seek out additional information might allow it to provide more nuanced and responsive answers to complex questions about marginalized experiences.

However, this potential is contingent on careful design and attention to inclusivity. Without a deliberate effort to address bias and promote equity, Agentic RAG could simply reinforce existing patterns of discrimination.

In conclusion, this meme is not simply a technical illustration. It's a snapshot of the evolving landscape of AI and the complex philosophical and social implications of these advancements. Applying these theoretical lenses reveals the meme's resonance with broader debates about knowledge, power, and the future of intelligence.

simple-description (llama3.2-vision_11b)

The meme is a humorous comparison between two approaches to AI-powered question answering: RAG (Retrieval-Augmented Generation) and Agentic RAG (a more advanced version of RAG). It suggests that Agentic RAG is more "intelligent" and produces more accurate and informative responses, while RAG is "dumber" and can only generate basic responses. The meme is likely meant to be humorous and satirical, poking fun at the idea that even the most advanced AI systems have their limitations.