First Seen

2023-12-18T06:47:43+00:00

simple-description (llama3.2-vision_11b)

This meme is a humorous commentary on the limitations of AI in understanding human context. The image shows a chart comparing the performance of AI models, with "Llama 2" (a large language model) and "Mistral" (a state-of-the-art language model) on various tasks. The chart's x-axis represents the model size in billions of parameters, and the y-axis shows the performance on tasks like understanding context, reasoning, and knowledge. The text overlay reads "Llama 2 (LLaMA 2.0) is still a large language model, not all of them are created equal"

detailed-analysis (gemma3-27b-vision)

Okay, here's an analysis of the provided graphs, broken down into sections addressing the requested theoretical frameworks. It's important to note that applying such complex theories to graphs of machine learning model performance is inherently interpretative and often metaphorical. I will try to be clear about where I'm stretching the frameworks to fit, and where the connection is more directly viable.

1. Visual Description

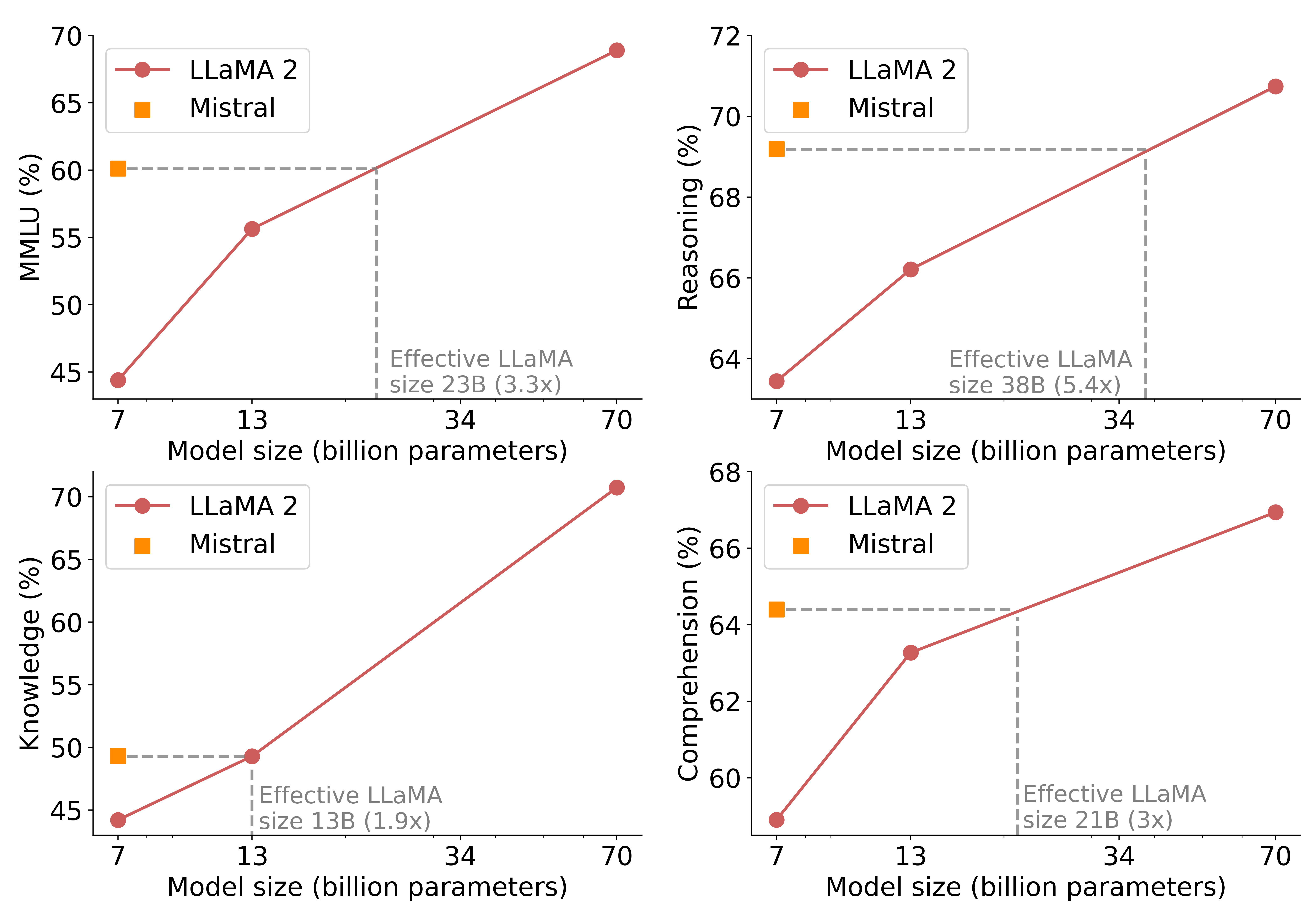

The image comprises four line graphs, each depicting the performance of two Large Language Models (LLMs), LLaMA 2 and Mistral, across different metrics. The x-axis of each graph represents model size in billions of parameters (ranging from approximately 7 to 70), while the y-axis represents performance as a percentage score (ranging from approximately 45% to 70%).

* Metrics: The four graphs showcase different aspects of model performance: MMLU (likely a multi-task language understanding benchmark), Reasoning, Knowledge, and Comprehension.

* Trends: In each graph, both models show a positive correlation between model size and performance. Larger models generally achieve higher scores. However, LLaMA 2 consistently outperforms Mistral across all metrics and model sizes.

* Vertical Dashed Lines: Each graph includes a vertical dashed line indicating an 'effective' LLaMA size (13B, 23B, 38B, 21B) with a multiplier (1.9x, 3.3x, 5.4x, 3x). This suggests a comparison point or a threshold where the relative performance difference is highlighted.

* Line Colors: LLaMA 2 is represented by orange lines, while Mistral is represented by pink lines. This visual distinction helps track performance across the graphs.

2. Foucauldian Genealogical Discourse Analysis

This framework examines how knowledge and power are intertwined. In this context, we can see the graph as reflecting a discourse about intelligence – specifically, artificial intelligence – and the power dynamics embedded within it.

Episteme & Truth: The graphs present a particular “truth” – that larger models (more parameters) are better models. This aligns with the current episteme (the underlying framework of thought) surrounding AI development – a relentless pursuit of scale. The graph doesn't prove this is the only or best way to build AI, but it naturalizes* it by presenting it as data.

* Power/Knowledge: The graph reinforces the power of those who control the resources to build and train these large models. The metrics themselves (MMLU, Reasoning, Knowledge, Comprehension) are defined by those in positions of power (researchers, corporations), framing what counts as "intelligence" and defining the terms of evaluation. The data validates the continued investment in scaling models.

* Genealogy: A genealogical analysis would trace the historical development of these metrics and the assumptions behind them. How did these specific measures of "intelligence" come to be prioritized? What alternative metrics were overlooked? The graph represents a single point in a longer, more complex history.

3. Critical Theory

Critical Theory is concerned with uncovering hidden power structures and challenging dominant ideologies.

Technological Determinism: The graph subtly reinforces technological determinism – the idea that technology shapes society. The implication is that progress in AI is inevitable and driven by technical advancements (increasing model size). Critical Theory would question this, arguing that technology is shaped by* social, political, and economic forces. The data doesn’t show the impact on society, rather the impact of resources in making the technology.

* Rationalization & Standardization: The quantification of "intelligence" (through percentages) is a form of rationalization and standardization. It reduces complex cognitive abilities to measurable numbers. Critical Theory might critique this as dehumanizing or overly simplistic, obscuring the qualitative aspects of intelligence.

* Ideology: The graph contributes to the ideology of "bigger is better" in AI. This ideology justifies massive investments in computing infrastructure and data collection, potentially at the expense of other approaches to AI development.

4. Marxist Conflict Theory

While seemingly less directly applicable, Marxist Conflict Theory can be brought to bear on this graph by focusing on the competition for resources and the resulting class dynamics.

* Means of Production: The capacity to build and train these large language models represents a significant "means of production" in the emerging AI landscape. The graph highlights the advantage held by those who control these resources (computing power, data, funding).

* Class Struggle (in AI): We can interpret the competition between LLaMA 2 and Mistral as a microcosm of a larger struggle for dominance in the AI field. LLaMA 2’s consistent outperformance suggests it holds a significant advantage, akin to a dominant class.

* Concentration of Capital: The graph implicitly demonstrates the concentration of capital required to build and maintain these models. Only organizations with substantial resources can compete at this level.

5. Postmodernism

Postmodernism challenges grand narratives and emphasizes the subjectivity of knowledge.

Deconstruction: A postmodern reading could "deconstruct" the graph by questioning the validity and neutrality of the metrics used. What does "comprehension" even mean* in the context of an AI? The graph presents these metrics as objective, but they are, in fact, social constructs.

Simulacra and Simulation: The graph itself is a simulation of intelligence, a representation that may bear little resemblance to true cognitive ability. The scores are simulacra* – copies without an original.

* Rejection of Metanarratives: The graph reinforces the metanarrative of technological progress. Postmodernism would challenge this, arguing that there is no single path to progress, and that different approaches to AI may be equally valid.

6. Queer Feminist Intersectional Analysis

This is the most challenging framework to apply directly, but we can consider it through the lens of representation and bias.

Data Bias: The performance of these models is inherently shaped by the data they are trained on. If the training data reflects existing societal biases (e.g., gender, race, class), the models will likely perpetuate those biases. The graph doesn't reveal anything about the content* of the training data, but it’s essential to acknowledge this potential issue.

* Representation & Power: The metrics used to evaluate the models may not adequately capture the complexities of human intelligence, particularly as experienced by marginalized groups. The graph reinforces a narrow definition of intelligence that may exclude alternative ways of knowing and understanding the world.

* Intersectional Considerations: The performance of these models may vary across different demographic groups. Further analysis is needed to determine whether the models exhibit bias in their responses to questions or tasks related to gender, race, sexuality, or other social categories.

Important Disclaimer: These are interpretations, applying complex theoretical frameworks to a relatively simple dataset. The connections are often metaphorical and require significant extrapolation. The primary purpose is to illustrate how these frameworks can be used to critically analyze even seemingly technical data.

tesseract-ocr

70 72 —e— LLaMA 2 —e— LLaMA 2 65) m= Mistral 70, | Mistral ~ oN m----------------------- S© 604) Berm m rrr rm mmm s5 — : = : 2 68 2 = 3 < 1 = 2° 2 3 : = 1 © 66 : 50 ! oc : AS ; Effective LLaMA 64 Effective LLaMA | | size 23B (3.3x) size 38B (5.4x) | 7 13 34 70 7 13 34 70 Model size (billion parameters) 68 Model size (billion parameters) 70) —e— LLaMA 2 —e LLaMA 2 65) = Mistral 661 m= Mistral = c me O g--------------- 5 60 a 64 . © v , ~ I = 55 OY 62 I S 2. . 250} — c : I UL 60 : | ;Effective LLaMA \Effective LLaMA 45 isize 13B (1.9x) size 21B (3x) 7 13 34 70 7 13 34 70 Model size (billion parameters) Model size (billion parameters)

simple-description (llama3.2-vision)

The meme is an image of a graph showing the performance of a language model (LLaMA 2) on various tasks compared to a previous model (LLaMA). The tasks are listed on the y-axis, with the x-axis representing the model's size in billions of parameters. The graph shows that LLaMA 2 outperforms the previous model in all tasks, with the largest improvements in "Reasoning" and "Comprehension".