First Seen

2026-03-29T20:07:24+00:00

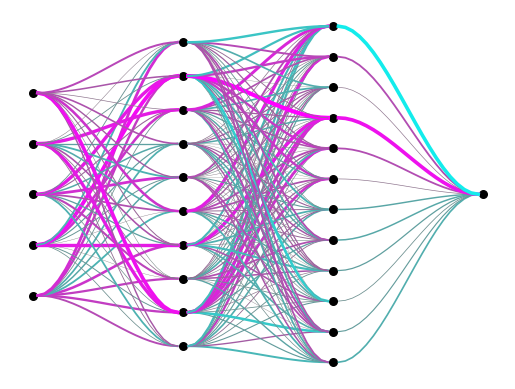

simple-description (qwen3.5_2b-q8_0)

This image illustrates a feedforward neural network, a basic architecture on which many modern machine learning models build. On the left, raw data enters an input layer of nodes, which act as processing units. In the middle, a dense web of connections represents the weighted sums computed between layers, whose weights let the network model relationships among the inputs. Finally, the processed information flows to a single output node on the right, which produces the final result or decision based on the data received.
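As a rough illustration of the left-to-right flow described above, here is a minimal sketch of a forward pass in plain Python. The layer widths, the random weights, and the `tanh` activation are assumptions chosen for the example; nothing in the image specifies them, and the helper names `make_layer` and `forward` are hypothetical.

```python
import math
import random

def make_layer(n_in, n_out, rng):
    """Build one fully connected layer: a weight row per output node, plus biases."""
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases

def forward(layers, inputs):
    """Pass the inputs through each layer in order: weighted sum, then tanh."""
    activations = list(inputs)
    for weights, biases in layers:
        activations = [
            math.tanh(sum(w * a for w, a in zip(row, activations)) + b)
            for row, b in zip(weights, biases)
        ]
    return activations

rng = random.Random(0)  # fixed seed so the sketch is repeatable
sizes = [4, 6, 6, 1]    # hypothetical widths, ending in a single output node
layers = [make_layer(n_in, n_out, rng) for n_in, n_out in zip(sizes, sizes[1:])]
output = forward(layers, [0.5, -0.2, 0.1, 0.9])
print(output)           # a list holding one value between -1 and 1
```

Each layer only ever reads the activations of the layer before it, which is what makes the network "feedforward": there are no loops back toward the input.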

detailed-analysis (gemma3_27b-it-q8_0)

This analysis breaks down the image in the context of Artificial Intelligence, applying the requested theoretical lenses where applicable.

Visual Description

The image depicts a complex network of interconnected nodes (black circles) linked by lines of varying colors (predominantly magenta and teal). The network has a distinct layered structure: a clear input layer on the left, a series of hidden layers in the middle, and an output layer on the right consisting of only one node. The lines represent connections between nodes and indicate the flow of information. The density of connections is extremely high, especially in the central layers, creating a pattern that looks chaotic but is structured. The overall shape is elongated and somewhat resembles a distorted hourglass.
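To give a sense of why the central layers look so dense, the following sketch counts the connections in a fully connected layered network. The layer widths are hypothetical, since the image's actual node counts are not stated, and `count_connections` is an illustrative helper rather than part of any library. It also assumes every node connects to every node in the next layer.

```python
def count_connections(layer_sizes):
    """Count edges when every node links to every node in the next layer."""
    return sum(a * b for a, b in zip(layer_sizes, layer_sizes[1:]))

# Hypothetical widths: input layer, three hidden layers, one output node.
sizes = [8, 16, 16, 16, 1]
total = count_connections(sizes)
print(total)  # 128 + 256 + 256 + 16 = 656
```

The hidden-to-hidden links dominate the total, while the final layer contributes only one line per node, which matches the description of a dense middle narrowing to a single output.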

Critical Theory

From a critical theory perspective, the image serves as a potent metaphor for the "black box" problem inherent in many AI systems, particularly deep learning. These systems, like the depicted network, are often incredibly complex. The connections and weighting between nodes are learned through training data and algorithms, but are often opaque and difficult to interpret even by their creators.

- Obscuration of Power: The complexity can be seen as a way of obscuring the power dynamics embedded within the AI system. Who decides the training data? What biases are present within that data? How are the algorithms designed, and whose values do they reflect? The network's density makes it hard to interrogate these questions, effectively masking the decision-making process.

- Instrumental Reason: The focus on optimization and "efficiency" in AI development (represented by the densely connected network striving for a single output) can be critiqued as an example of Max Horkheimer and Theodor Adorno’s “instrumental reason.” The technology is valued not for its inherent worth, but for its utility, potentially leading to a devaluation of human reason and agency.

- Reification: The network itself becomes a reified object - a thing that stands in for a complex process, potentially obscuring the social and political forces that shaped it.

Postmodernism

A postmodern reading highlights the dismantling of traditional notions of knowledge and representation.

- Deconstruction of Truth: The image challenges the idea of a singular, objective "truth." The output of the network isn't derived from a simple, logical process. It’s an emergent property of countless interconnected, probabilistic calculations. There is no straightforward path from input to output. The meaning of the output is constructed within the network and is contingent upon the training data and algorithmic parameters.

- Simulacra and Simulation: Jean Baudrillard's concept of simulacra is relevant. The network represents a simulation of intelligence, but the simulation has become so complex that it starts to resemble, and perhaps even replace, genuine understanding. The network operates on patterns and correlations, potentially without any grounding in “reality.”

- Fragmentation and Complexity: The network’s chaotic yet structured pattern mirrors the postmodern emphasis on fragmentation and the rejection of grand narratives. There's no single, overarching explanation for how the network operates; it's a collection of local interactions.

Foucauldian Genealogical Discourse Analysis

Foucault’s work explores how power operates through discourse and knowledge systems. Applying this lens to the image:

- Episteme: The image represents a shift in the episteme - the underlying set of assumptions and beliefs that shape knowledge. The dominance of neural networks reflects a shift toward viewing intelligence as computational and quantifiable.

- Power/Knowledge: The development and deployment of AI systems are not neutral. They are deeply intertwined with power relations. Those who control the data, the algorithms, and the computational resources wield significant power. The image, in its complexity, can be seen as a visualization of this power/knowledge nexus. The “truth” produced by the AI is shaped by the power structures that created it.

- Discipline and Surveillance: AI systems, particularly those used for data analysis and prediction, can be seen as instruments of discipline and surveillance. The network becomes a mechanism for categorizing and controlling individuals based on data.

Marxist Conflict Theory

From a Marxist perspective:

- Capital and Labor: AI development is heavily concentrated within large corporations (the "bourgeoisie"). The labor required to develop and maintain these systems is often undervalued and exploited. The image can be seen as representing the material forces of production – the technology itself – which is owned and controlled by a small elite.

- Alienation: The complexity of the network can contribute to the alienation of both the developers who struggle to understand it fully and the users who are subjected to its decisions without understanding how they are made.

- Ideology: The promotion of AI as a neutral and objective technology can be seen as an ideological justification for the existing power structures. The network's apparent rationality masks the underlying social and economic inequalities.

Summary

This image of a neural network is much more than just a technical diagram. It's a powerful visual metaphor for the complex social, political, and philosophical implications of Artificial Intelligence. The theoretical lenses outlined above reveal how AI systems challenge traditional notions of knowledge, power, and agency, and how they can reinforce existing inequalities. The "black box" nature of these systems, while enabling impressive capabilities, also demands critical scrutiny.

simple-description (llama3.2-vision_11b)

The image shows a neural network made of nodes and connections, where each node represents a neuron and each line represents a weighted link between two neurons. The nodes are arranged in a specific pattern, with some nodes having multiple connections and others having none. The image is likely used to represent a complex AI model or algorithm.