First Seen

2026-04-02T05:28:21+00:00

simple-description (qwen3.5_2b-q8_0)

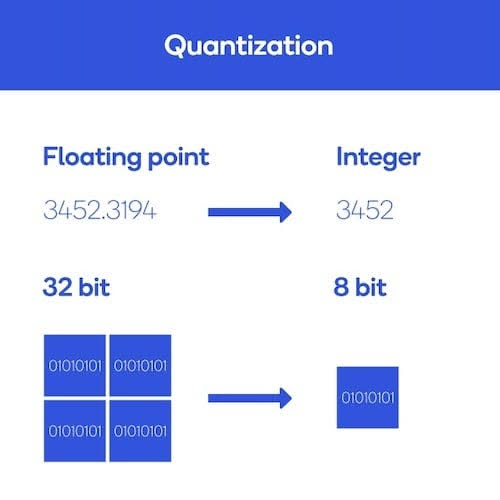

This diagram illustrates quantization, a technique common in machine learning that maps high-precision floating-point values to lower-precision integers for more efficient storage and processing. By truncating the decimal portion of 3452.3194, the image shows how a computer can simplify a floating-point number down to a plain integer (3452). The blue squares of binary code represent the accompanying loss of precision, depicting the shift from a higher-precision bit format to a more compact 8-bit integer block.
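The truncation step in the image can be reproduced in a few lines. This is a minimal sketch and not how ML frameworks typically quantize, since they usually scale and round rather than truncate. It also shows that 3452 needs 12 bits, so the integer would not fit in an 8-bit block without an extra scaling step. The helper `fits_in_int8` is hypothetical and is used only for illustration.

```python
# Minimal sketch: truncate a float to an integer, then check whether
# the result fits in a signed 8-bit integer (range -128..127).
# fits_in_int8 is a hypothetical helper, not a library function.

INT8_MIN, INT8_MAX = -128, 127


def fits_in_int8(n: int) -> bool:
    """Return True if n can be stored in a signed 8-bit integer."""
    return INT8_MIN <= n <= INT8_MAX


value = 3452.3194
truncated = int(value)  # int() truncates toward zero, dropping the .3194

print(truncated)                # 3452
print(truncated.bit_length())   # 12 bits needed for the magnitude
print(format(truncated, "b"))   # 110101111100
print(fits_in_int8(truncated))  # False
```

In practice, quantizers first divide values by a scale factor so that they land inside the 8-bit range. That scaling step is what makes an 8-bit block usable for values this large.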

detailed-analysis (gemma3_27b-it-q8_0)

Here's an analysis of the image, framed within the context of Artificial Intelligence, using the requested theoretical lenses where applicable.

Visual Description

The image illustrates the concept of "quantization" in the context of computing, specifically relevant to Artificial Intelligence models. It shows a transformation:

- Top: A floating-point number (3452.3194) is reduced to an integer (3452). This represents a loss of precision.

- Bottom: Four 32-bit blocks are reduced to a single 8-bit block. This visually demonstrates a reduction in memory usage and computational resources, again at the cost of data precision. The blocks of 010101 represent bits, the fundamental units of information.

- Arrows: Blue arrows signify the transition or conversion process.

- Title: The overall title is "Quantization," indicating the core topic.

This imagery is common in discussions about model optimization in machine learning.
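The memory reduction and precision loss described above can be sketched with symmetric per-tensor int8 quantization. This is one common scheme, chosen here as an assumption; the image does not specify a method. The helper names `quantize` and `dequantize` and the sample `weights` are hypothetical.

```python
import struct

# Minimal sketch of symmetric per-tensor int8 quantization.
# quantize/dequantize are hypothetical helpers, not a library API.


def quantize(values: list[float]) -> tuple[list[int], float]:
    """Map floats to int8 codes in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate floats from int8 codes."""
    return [q * scale for q in codes]


weights = [0.82, -1.37, 0.004, 2.91, -0.55]  # hypothetical sample values
codes, scale = quantize(weights)
restored = dequantize(codes, scale)

# Standard sizes: float32 ("<f") is 4 bytes, int8 ("<b") is 1 byte.
float32_bytes = len(weights) * struct.calcsize("<f")
int8_bytes = len(codes) * struct.calcsize("<b")

for w, r in zip(weights, restored):
    print(f"{w:+.4f} -> {r:+.4f}  (error {abs(w - r):.4f})")
print(float32_bytes, "bytes ->", int8_bytes, "bytes")
```

The tiny 0.004 weight collapses to code 0, which is the kind of precision loss the image depicts. A single shared scale is the simplest design. Real toolkits often use per-channel or per-block scales, or add a zero point, to reduce this error.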

Critical Theory

From a critical theory perspective, quantization reveals the inherent trade-offs involved in representing and processing information within AI systems. The move from a high-precision floating-point number to a lower-precision integer is not merely a technical step. It shows how choices made during model design and implementation reflect underlying assumptions about what is important and what can be sacrificed in the pursuit of efficiency.

This process is deeply tied to power dynamics. Those who decide on quantization strategies (e.g., engineers at large tech companies) are exercising power over how AI systems “see” and interact with the world. The loss of nuance due to quantization could systematically disadvantage certain groups if the original, more precise data held information crucial to accurate representation of their experiences (e.g., in facial recognition, medical diagnosis, or loan applications). The focus on efficiency and lower resource usage can be framed as an optimization for capital (reducing costs) at the potential expense of equity and fairness.

Marxist Conflict Theory

Within a Marxist framework, quantization can be understood as a manifestation of the constant drive for increased capital accumulation. The reduction in data precision (quantization) leads to:

- Reduced computational cost: Lower precision numbers require less memory and faster processing.

- Lower hardware requirements: Models can run on cheaper devices, broadening accessibility (or appearing to do so) but also allowing for greater market control by those who can afford the hardware and the optimized models.

- Increased profit: All of the above translate into lower costs for the companies deploying AI, increasing their profit margins.
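The memory claim in the first bullet is simple arithmetic, because weight storage scales linearly with the number of bits per parameter. The sketch below is a minimal back-of-the-envelope calculation. It assumes a hypothetical model with 7 billion parameters and counts weights only, ignoring activations, caches and quantization metadata such as scales.

```python
# Back-of-the-envelope weight memory for a hypothetical 7-billion-parameter model.
# Counts weights only; ignores activations, caches, and per-block scale metadata.

PARAMS = 7_000_000_000  # hypothetical parameter count


def weight_gigabytes(params: int, bits_per_param: int) -> float:
    """Bytes needed for the weights, expressed in decimal gigabytes (1e9 bytes)."""
    return params * bits_per_param / 8 / 1e9


for bits in (32, 16, 8, 4):
    print(f"{bits:>2}-bit: {weight_gigabytes(PARAMS, bits):.1f} GB")
```

Under these assumptions, moving from 32-bit to 8-bit weights cuts storage by a factor of four, from 28 GB to 7 GB.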

The conflict arises from prioritizing profit over the potential consequences of reduced accuracy and fairness. Value is extracted through the efficient use of computational resources, but this efficiency rests on abstracting and simplifying data, potentially with real-world costs for marginalized groups. The “means of computation” become further concentrated in the hands of those with capital.

Postmodernism

Postmodern thought challenges the notion of objective reality and absolute truth. Quantization fits neatly into this framework because it demonstrates the constructed nature of information. The "real" value of 3452.3194 is never fully captured by 3452. It's a representation, a simulacrum, of that value. The 8-bit representation of the 32-bit block isn't an accurate copy, but a simplified version.
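The observation that 3452 only represents 3452.3194 also applies one level earlier. The value 3452.3194 itself has no exact binary floating-point representation. The minimal sketch below uses Python's standard `struct` module to round-trip the value through IEEE 754 single precision (float32) and then prints the exact stored values.

```python
import struct
from decimal import Decimal

# Round-trip 3452.3194 through a 32-bit IEEE 754 float to see the value
# that is actually stored, before any integer quantization happens.
original = 3452.3194
(as_float32,) = struct.unpack("<f", struct.pack("<f", original))

print(Decimal(as_float32))  # 3452.3193359375 (exact stored float32 value)
print(Decimal(original))    # exact stored float64 value, also not exactly 3452.3194
```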

This echoes postmodern concepts like the "death of the author" (in this case, the original data source) and the prioritization of signifiers over signifieds. The focus shifts from the actual value to the code itself and to the way the machine interprets it. There is no "original" or "true" representation, only increasingly abstracted levels of simulation.

Where the other requested frameworks don’t apply:

- Foucauldian Genealogical Discourse Analysis: While we can talk about the discourse around optimization in AI, quantization itself is a fairly concrete technical process, not a discursive formation shaping power/knowledge in the way Foucault would analyze.

- Queer Feminist Intersectional Analysis: While the potential for bias in AI systems (affected by quantization) can have disproportionate impacts on queer and/or marginalized groups (and thus could be analyzed through an intersectional lens), quantization itself isn’t inherently tied to gender, sexuality, or intersectionality in a way that demands this specific framework. Its effects on bias must be investigated after considering its technical implications.

In conclusion, this seemingly simple image of quantization is a microcosm of the larger ethical, political, and philosophical debates surrounding the development and deployment of Artificial Intelligence. It demonstrates how technical choices can have profound implications for power, equity, and our understanding of reality itself.

simple-description (llama3.2-vision_11b)

The meme is a play on the concept of "quantization" in artificial intelligence (AI) and machine learning. The image shows the number 3452.3194 being converted to the integer 3452 and then further quantized to an 8-bit representation, 01010101. The joke is that this 8-bit representation is actually a representation of the original number 3452.3194, not just any integer. The humor comes from the idea that even an 8-bit representation can stand in for a precise value like 3452.3194, making it a clever and accurate representation of the original number. The meme pokes fun at the idea that simple methods like 8-bit representation can be used to represent complex data, while also highlighting the limits of this method.
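The idea that an 8-bit code can stand in for 3452.3194 holds only approximately. Eight bits give 256 distinct codes, so a value this large must be mapped through a step size and is recovered only to within half a step. The sketch below assumes a hypothetical unsigned 8-bit grid covering 0 to 4096, which gives a step of 16. The names `encode`, `decode`, `LEVELS`, `RANGE_MAX` and `STEP` are illustrative only.

```python
# Hypothetical unsigned 8-bit quantizer covering [0, 4096) with 256 codes.
LEVELS = 256
RANGE_MAX = 4096.0
STEP = RANGE_MAX / LEVELS  # 16.0 units per code


def encode(x: float) -> int:
    """Map x to the nearest 8-bit code, clamped to 0..255."""
    return max(0, min(LEVELS - 1, round(x / STEP)))


def decode(code: int) -> float:
    """Map an 8-bit code back to the center of its step."""
    return code * STEP


x = 3452.3194
code = encode(x)
print(code, format(code, "08b"), decode(code))  # 216 11011000 3456.0
```

Under this grid, every input from about 3448 to 3464 lands on the same code, 216. This is the limit of the method in concrete terms.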