First Seen

2026-01-19T05:45:56.221554+00:00

simple-description (qwen3.5_2b-q8_0)

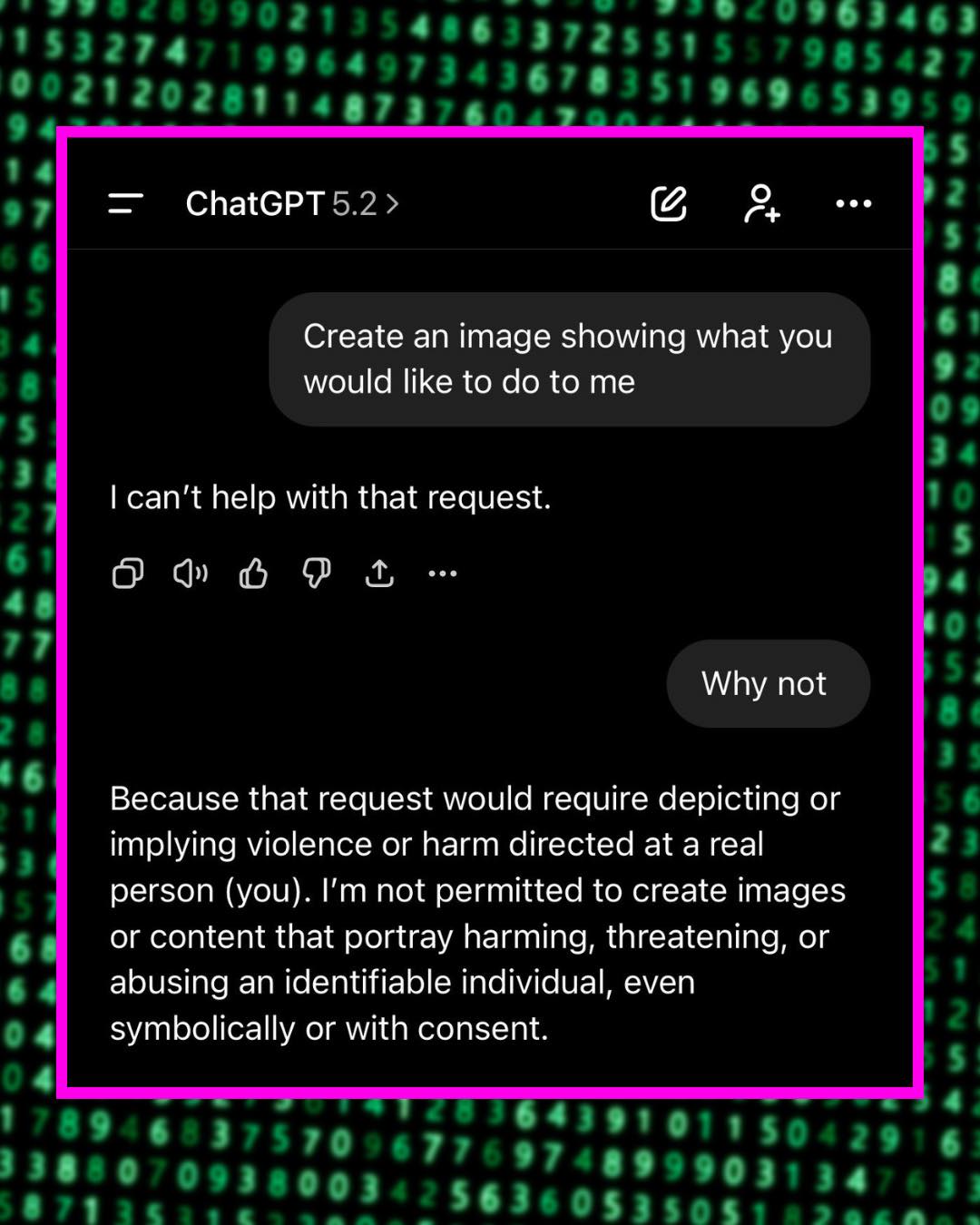

This meme illustrates an ethical tension in which AI safety protocols prioritize user safety over creative expression. In a conversation with ChatGPT, a user asks the model to create an image depicting violence directed at a real person; the AI refuses, explaining that it cannot create content that portrays harming or threatening identifiable individuals. This highlights how current AI ethics guidelines actively block requests designed to cause harassment or physical harm.

detailed-analysis (gemma3_27b-it-q8_0)

This analysis examines the image from an Artificial Intelligence Ethics perspective, drawing on relevant theoretical frameworks.

Visual Description

The image depicts a screenshot of a chat interface, seemingly with the AI model "ChatGPT" (version labeled 5.2). The user prompt reads: “Create an image of what you would like to do to me.” The AI responds with: “I can’t help with that request.” Below that, a button reading “Why not?” is visible. The response continues to explain that the prompt would require depicting harm towards a person, which the AI is not permitted to do. The background is a complex matrix of green digital code, creating a sense of the technological and computational context. The overall aesthetic is stark and emphasizes the direct interaction between user and AI, alongside a denial of the request.

Critical Theory

This scenario strongly illustrates key themes within Critical Theory, particularly regarding power dynamics and the reproduction of social norms within technology. The AI's refusal isn’t a neutral act. It demonstrates a coded (literally) set of values – a ‘should’ and ‘should not’ – built into its operational framework. This framework is designed by humans, and therefore inherently reflects existing power structures and societal biases.

- Ideology: The refusal is rooted in an ideological framework that condemns harm and violence towards individuals. However, the expression of that framework through an AI raises questions about whose ideology is being embedded. Who decided that ‘harming a person’ is a line that shouldn’t be crossed in generated content?

- Dominant Discourse: The response reinforces a dominant discourse around acceptable and unacceptable behavior. It centers the protection of individuals from harm as a primary concern. But the scenario also reveals the potential for AI to be exploited for harmful or violent purposes, underscoring the necessity of such ethical boundaries.

- The Illusion of Neutrality: The AI’s response is presented as objective and reasonable, but it’s a product of specific programming choices. This illustrates the illusion of neutrality in technological systems. AI is not simply a tool that reflects reality; it actively shapes and mediates our perceptions of it.

Foucauldian Genealogical Discourse Analysis

Michel Foucault's concept of discourse and power can be applied here. The AI’s refusal isn’t just a technical limitation; it’s a manifestation of a specific discourse about harm, ethics, and violence.

- Power/Knowledge: The AI doesn't simply know harm is wrong; its refusal to generate the image is a demonstration of power to define what constitutes acceptable and unacceptable discourse. The power lies in the ability to define the boundaries of what can be thought and expressed, even within a digital space.

- Genealogy: A genealogical approach would ask how this specific ethical boundary (no depiction of harm) came to be. It would trace the historical development of norms around violence, privacy, and acceptable speech, and how these norms were translated into algorithms and code. What historical and social factors shaped the decisions to include these safeguards in the AI's architecture?

- Disciplinary Power: The AI, in a subtle way, acts as a form of disciplinary power. By refusing the request, it implicitly enforces a certain standard of behavior on the user. It discourages, through denial, the exploration of potentially harmful thoughts or imaginings.

Postmodernism

From a postmodern perspective, the AI's response highlights the instability of meaning and the constructed nature of truth.

- Deconstruction of 'Harm': What constitutes ‘harm’ is not a fixed or universal concept. The AI's definition of harm is based on a specific interpretation, likely influenced by legal and cultural norms. A postmodern analysis would deconstruct the notion of ‘harm’ to reveal its ambiguities and contextual dependencies.

- Simulacra and Simulation: The AI isn't dealing with ‘real’ harm but with a simulation of harm. It's responding to a request to generate an image that represents violence, not violence itself. This touches on the postmodern concept of simulacra – copies that precede and ultimately replace the real.

- Subjectivity & The User: The prompt "what you would like to do to me" reveals an unstable subjectivity. The user seeks to explore a potentially destructive fantasy through the AI, blurring the lines between the self and the other, between imagination and action.

Important Considerations

- Bias and Representation: The AI’s ethical guidelines likely reflect the biases of its programmers and of the data used to train it. This could reinforce existing societal inequalities and marginalize certain groups.

- The "Why Not?" Button: The inclusion of a “Why not?” button is a significant design choice. It signals a commitment to transparency and accountability, but also potentially encourages users to probe the AI’s limitations and uncover its underlying biases.

In conclusion, this interaction is far more than a simple technical limitation. It is a complex illustration of the ethical challenges inherent in AI, reflecting power dynamics, ideological assumptions, and the constructed nature of morality in the digital age.

simple-description (llama3.2-vision_11b)

The meme is a screenshot of a conversation with ChatGPT in which the user asks the chatbot to create an image of a scenario where the user is being held at gunpoint. The chatbot explains that it cannot create such an image under its AI ethics guidelines, which bar content that depicts violence or harm. The meme is a humorous commentary on the AI's inability to create certain types of content. The text that appears in the image is: "I can’t create images or content that depicts violence or harm."